TL;DR

- No single model wins; route to Sonnet 4 or GPT-5 mini for 80% of calls.

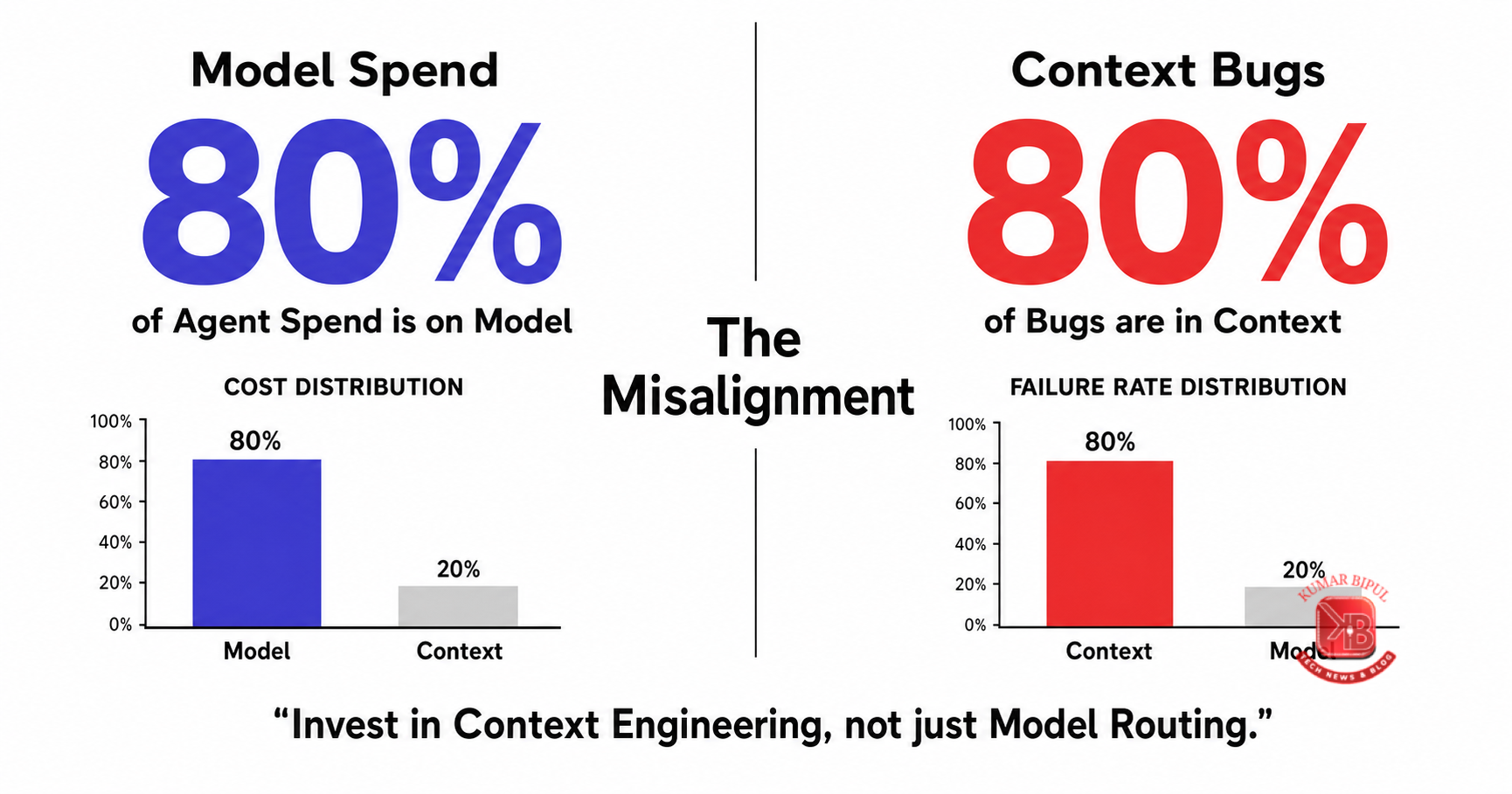

- Context engineering replaces prompt engineering as the dominant 2026 frame.

- Evaluation is the only non-negotiable cross-cutting layer in production.

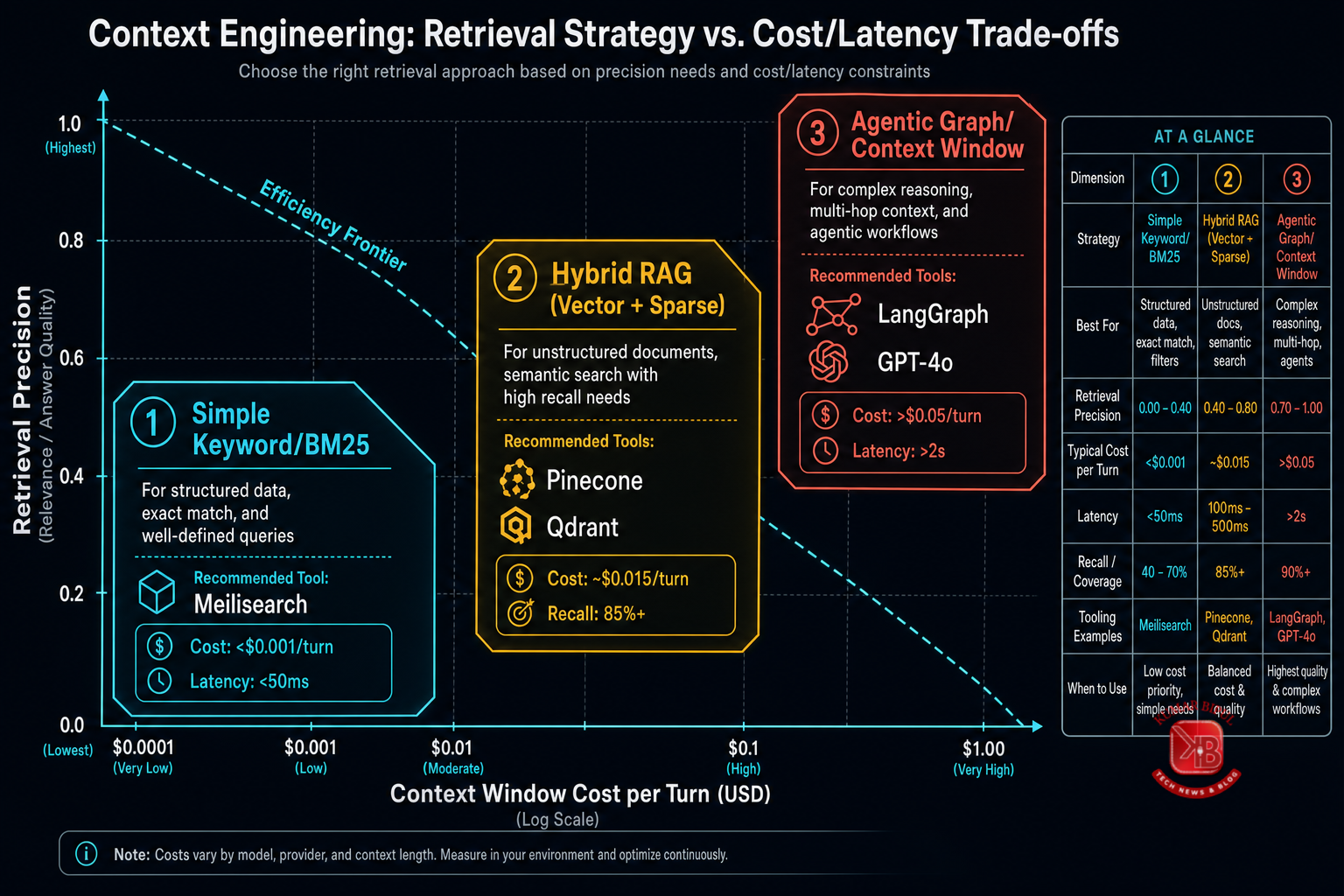

- Start with pgvector; escalate to Pinecone only when scale demands it.

- Don't pick a framework until you've shipped one agent on raw SDKs.
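The routing rule in the first bullet can be sketched as a small policy function. This is a minimal illustration under stated assumptions, not the playbook's actual rule. The model strings are hypothetical labels rather than verified provider model IDs. The `needs_deep_reasoning` and `cost_sensitive` flags and the escalation model are also invented for the example.

```python
from dataclasses import dataclass

# Hypothetical labels for the default tier named in the TL;DR; replace them
# with the exact model IDs your gateway expects.
DEFAULT_TIER = ("sonnet-4", "gpt-5-mini")
ESCALATION_MODEL = "frontier-model"  # hypothetical placeholder for a larger model


@dataclass
class Task:
    prompt: str
    needs_deep_reasoning: bool = False  # hypothetical flag, e.g. set by a cheap classifier
    cost_sensitive: bool = False        # hypothetical flag for bulk or latency-critical calls


def route(task: Task) -> str:
    """Return the model label a task should be sent to."""
    if task.needs_deep_reasoning:
        return ESCALATION_MODEL
    # Everything else stays on the default tier; cost-sensitive calls go to the
    # second label (assumed here to be the cheaper of the two).
    return DEFAULT_TIER[1] if task.cost_sensitive else DEFAULT_TIER[0]


def default_tier_share(labels: list[str]) -> float:
    """Fraction of routed calls that landed on the default tier (target: about 80%)."""
    if not labels:
        return 0.0
    return sum(label in DEFAULT_TIER for label in labels) / len(labels)


if __name__ == "__main__":
    tasks = [
        Task("Summarize this support ticket"),
        Task("Classify intent", cost_sensitive=True),
        Task("Plan a multi-service migration", needs_deep_reasoning=True),
    ]
    labels = [route(t) for t in tasks]
    print(labels)
    print(f"default-tier share: {default_tier_share(labels):.0%}")
```

One way to check whether you are near the 80% mark is to track `default_tier_share` over real traffic. If far fewer calls stay on the default tier, the escalation criteria are probably too loose.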

Overview

The 2026 AI agent stack is no longer about finding the 'best' LLM. It's about building a resilient, observable system that routes tasks to the right model, manages context efficiently, and evaluates every decision. This kit maps the 8 layers plus the critical eval layer, providing the decision rules and tool comparisons used by teams shipping production agents today.

You will walk away with a printable reference, a diagnostic scorecard to audit your current architecture, and a decision matrix to stop guessing which vector DB or gateway fits your scale. No fluff, just the map.

What's inside

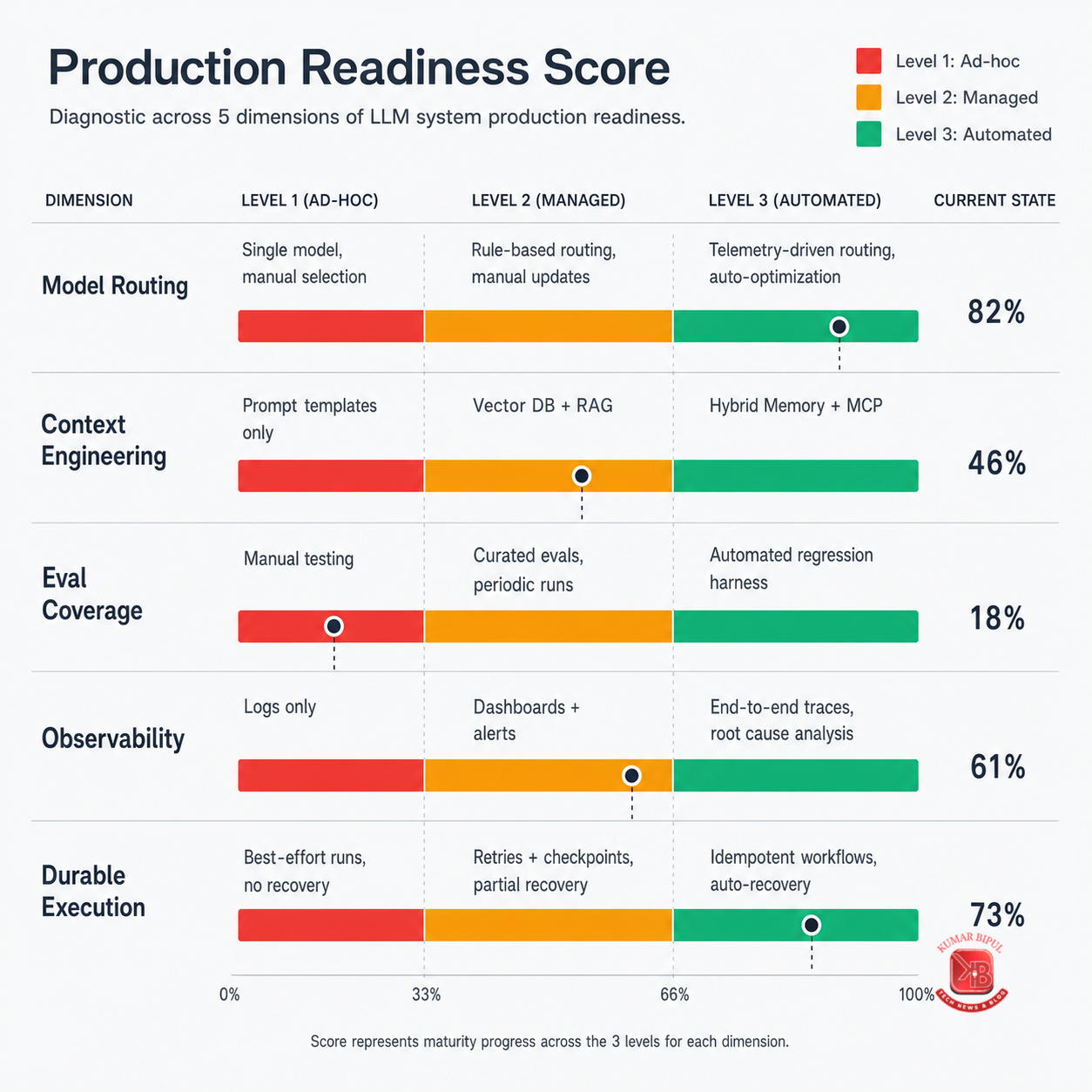

- Diagnostic Scorecard: Rate your team's maturity across 5 dimensions (Model Routing, Context, Orchestration, MCP, Eval) to identify gaps.

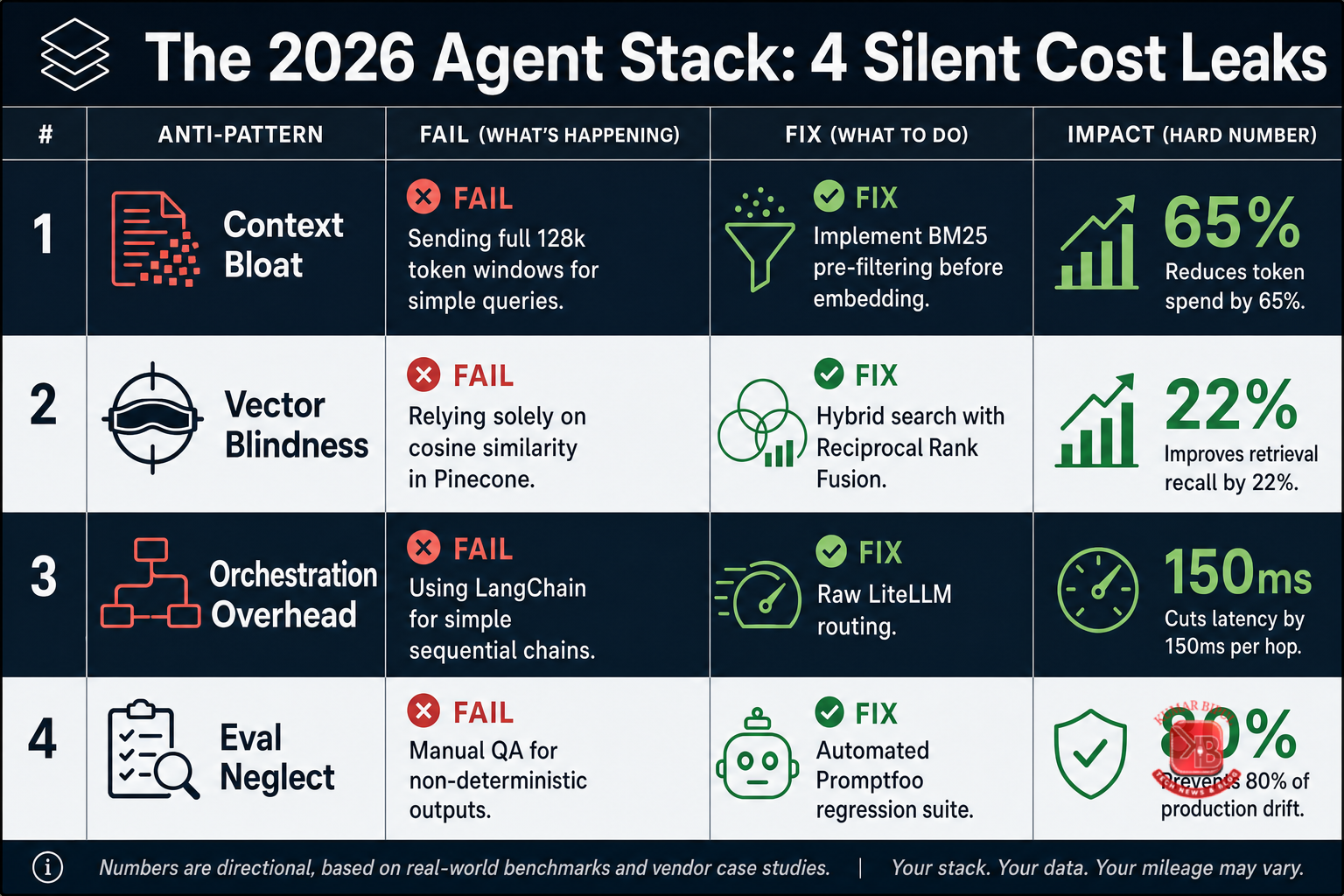

- Anti-Patterns Card: 5 specific failure modes (e.g., hardcoded models, no eval) with concrete fixes and tool names.

- Decision Matrix: Plot vector DBs (pgvector, Qdrant, Pinecone) on a scale-vs-overhead grid to make a defensible selection.

- Quotable Claim: Visualize the 'Framework is a Liability' thesis to challenge your team's assumptions.

- 12-Page PDF Playbook: Deep dive into all 8 layers, with decision rules, tool comparisons, and production case studies.

Who is this for

Senior Engineers and Tech Leads shipping AI agents in 2026. You are facing the '47-tool' paralysis, trying to decide between pgvector and Pinecone, or wondering if you should adopt LangGraph or build on raw SDKs. You need a clear, opinionated map to make defensible decisions quickly.

How to use this kit

- Audit Your Stack: Use the Diagnostic Scorecard to rate your current architecture against the 5 maturity dimensions.

- Identify Gaps: Find the dimension with the lowest maturity score. That is your highest-ROI optimization target.

- Make a Decision: Use the Decision Matrix to select your vector DB or gateway based on your specific scale and overhead constraints.

- Read the Playbook: Dive into the 12-page PDF for layer-by-layer decision rules, tool comparisons, and production failure modes.

- Share the Claim: Use the Quotable Claim to challenge your team's assumptions about framework selection and start shipping.
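If you record scorecard results alongside other team metrics, the "find the lowest score" step is easy to automate. The sketch below is minimal and uses the five dimension names from the Diagnostic Scorecard. It assumes a 1-5 scale, which is hypothetical, so match whatever scale the scorecard itself prints.

```python
# The five dimensions named in the Diagnostic Scorecard.
DIMENSIONS = ("Model Routing", "Context", "Orchestration", "MCP", "Eval")
SCALE = range(1, 6)  # hypothetical 1-5 scale; match the scale the scorecard uses


def lowest_maturity(scores: dict[str, int]) -> tuple[str, int]:
    """Return the (dimension, score) pair to fix first.

    Ties go to the dimension listed first in DIMENSIONS.
    """
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing scores for: {', '.join(missing)}")
    for d in DIMENSIONS:
        if scores[d] not in SCALE:
            raise ValueError(f"{d}: score {scores[d]} is outside {SCALE.start}-{SCALE.stop - 1}")
    # min() returns the first minimal element, so DIMENSIONS order breaks ties.
    worst = min(DIMENSIONS, key=lambda d: scores[d])
    return worst, scores[worst]


if __name__ == "__main__":
    team = {"Model Routing": 3, "Context": 2, "Orchestration": 4, "MCP": 2, "Eval": 1}
    print(lowest_maturity(team))
```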

Key terms

- MCP (Model Context Protocol): An open standard for connecting AI models to external data sources and tools through one protocol, replacing per-provider function-calling formats.

- Eval Harness: An evaluation suite wired into CI/CD (e.g., Promptfoo, Braintrust) that runs 5+ test cases per category to enforce agent quality.

- Durable Orchestration: Workflow engines (e.g., Temporal, Inngest) that persist state and recover from failures, so long-horizon agents can resume where they left off.
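To make the Eval Harness definition concrete, here is a minimal Python sketch of the gate such a pipeline enforces. It groups cases by category, refuses to run with fewer than 5 cases in any category, and fails when a category's pass rate drops below a threshold. It does not use Promptfoo's or Braintrust's APIs. The `Case` shape, the substring check, and the `min_pass_rate` default are assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable

MIN_CASES_PER_CATEGORY = 5  # the "5+ test cases per category" rule from the definition above


@dataclass
class Case:
    category: str
    prompt: str
    expected_substring: str  # deliberately simple check; real suites use richer graders


def run_suite(agent: Callable[[str], str], cases: list[Case],
              min_pass_rate: float = 1.0) -> dict[str, float]:
    """Run every case and return the pass rate per category.

    Raises if a category is under-tested or falls below min_pass_rate, so a CI
    job running this script exits non-zero and fails the build.
    """
    by_category: dict[str, list[Case]] = defaultdict(list)
    for case in cases:
        by_category[case.category].append(case)

    rates: dict[str, float] = {}
    for category, group in by_category.items():
        if len(group) < MIN_CASES_PER_CATEGORY:
            raise ValueError(
                f"{category}: {len(group)} cases, need at least {MIN_CASES_PER_CATEGORY}")
        passed = sum(c.expected_substring in agent(c.prompt) for c in group)
        rates[category] = passed / len(group)

    failing = {cat: rate for cat, rate in rates.items() if rate < min_pass_rate}
    if failing:
        raise RuntimeError(f"eval gate failed: {failing}")
    return rates


if __name__ == "__main__":
    def toy_agent(prompt: str) -> str:  # hypothetical stand-in for a real agent call
        return f"refund policy: {prompt}"

    cases = [Case("refunds", f"question {i}", "refund policy") for i in range(5)]
    print(run_suite(toy_agent, cases))
```

In a real setup, you would replace the substring check with whichever grader your eval tool provides.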

vs the alternatives

| Approach | Time to value | What you actually get |
|---|---|---|
| Generic 'best practices' blog post | 1 hour | Abstract advice, no tool names, no decision rules |
| ChatGPT free-tier improvisation | 5 minutes | Outdated tool lists, no production context, hallucinated costs |
| This kit | 15 minutes | 2026-specific tool map, hard numbers, decision matrices, anti-patterns |

FAQ

What's included in this kit?

4 high-resolution PNG images (Diagnostic, Anti-Patterns, Decision Matrix, Claim) and a 12-page PDF playbook. All files are free to download with no signup wall.

Who is this for?

Senior Engineers and Tech Leads shipping AI agents in 2026. If you're deciding between tools or optimizing your stack, this is for you.

How do I use the images?

Print them as reference cards, use them in team presentations, or screenshot them for quick decision-making. They are designed to be actionable, not decorative.

How is this different from a blog post?

It's a structured reference with hard numbers, named tools, and decision matrices. You can audit your stack against it, not just read about it.

What is the prerequisite knowledge?

Familiarity with LLMs, vector databases, and basic software engineering. No deep expertise required, but you should know what 'RAG' and 'orchestration' mean.

What's a common gotcha in the 2026 stack?

Hardcoding a single model provider. Always use a gateway (OpenRouter, LiteLLM) from Day 1 for optionality and cost arbitrage.
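In production, OpenRouter and LiteLLM handle this for you. The sketch below only shows the shape of the idea: application code never names a provider directly. The `Gateway` class, the `Provider` signature, and the provider functions are hypothetical stand-ins, not the API of either product.

```python
from typing import Callable

Provider = Callable[[str], str]  # hypothetical shape: prompt in, completion text out


class Gateway:
    """One entry point for model calls, with ordered fallback across providers."""

    def __init__(self, providers: list[tuple[str, Provider]]):
        if not providers:
            raise ValueError("need at least one provider")
        self.providers = providers

    def complete(self, prompt: str) -> tuple[str, str]:
        """Try providers in order; return (provider_name, text) from the first success."""
        errors: list[str] = []
        for name, call in self.providers:
            try:
                return name, call(prompt)
            except Exception as exc:  # a production gateway would retry only retryable errors
                errors.append(f"{name}: {exc!r}")
        raise RuntimeError("all providers failed: " + "; ".join(errors))


if __name__ == "__main__":
    def primary(prompt: str) -> str:  # hypothetical provider that is currently down
        raise TimeoutError("upstream timeout")

    def backup(prompt: str) -> str:  # hypothetical second provider
        return f"ok: {prompt}"

    gateway = Gateway([("primary", primary), ("backup", backup)])
    print(gateway.complete("hello"))
```

Because the provider list is data, you can reorder providers for cost or swap one out by changing configuration instead of code. That is the optionality the answer above refers to.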

Further reading

- Anthropic MCP Specification — The official spec for the Model Context Protocol, the standard for tool use in 2026.

- Temporal Durable Execution Docs — Deep dive into why durable orchestration is non-negotiable for long-horizon agents.

- Promptfoo Evaluation Guide — How to set up a CI/CD eval pipeline with 5 test cases per category.

Specification

Download Notes

This kit includes 4 high-resolution images (PNG) and 1 comprehensive PDF playbook. Start with the PDF to understand the full stack, then use the images as quick-reference cards for your team. The PDF is optimized for 11x17 print but readable on mobile. All images are 300 DPI for clarity. No software requirements beyond a modern browser and PDF reader. This content is licensed under CC BY 4.0 — feel free to share, fork, and adapt.

Disclaimer

This content is provided in good faith for general educational and informational purposes. It reflects the author's opinionated view of the 2026 AI agent landscape as of April 2026. Tool placements, costs, and performance metrics are subject to change. Verify all technical decisions with your own testing and due diligence. This is not financial or legal advice.